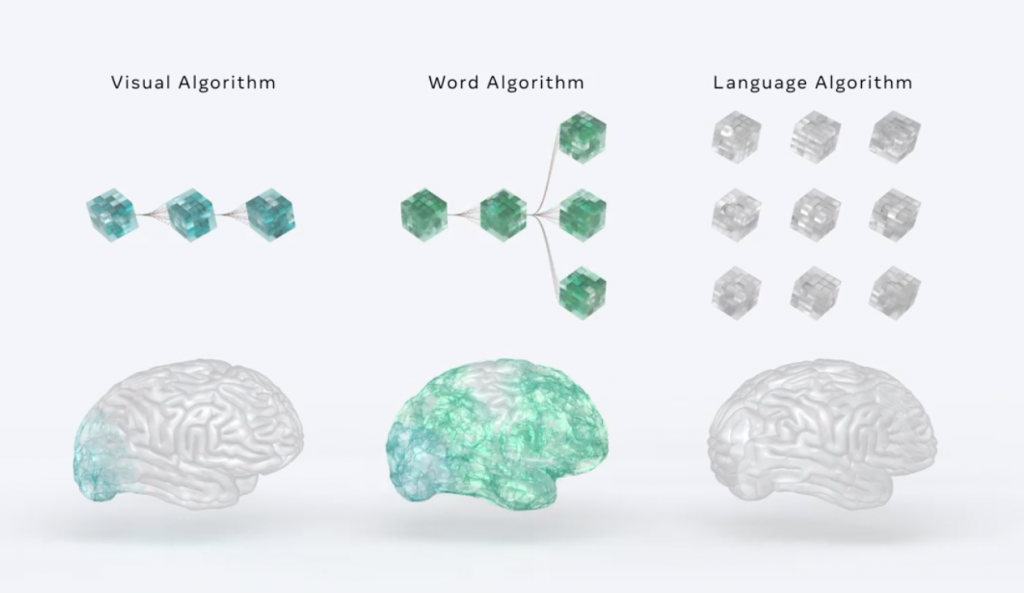

“With magnetoencephalography, we can then compare areas in the brain to modern language algorithms,” says a Meta researcher. (source: Meta AI)

Meta AI Building Supercomputer to Study, Replicate Human Brain Power

Mark Zuckerberg wants your brain. Well, not in the conventional way, like Dr. Frankenstein did, but in a way that helps train his computers and build his metaverse.

If artificial intelligence is meant to resemble a brain, with networks of artificial neurons standing in for real cells, what happens when you compare the activity in deep learning algorithms to the activity in a human brain? Last week, researchers from Meta AI announced they would partner with the neuroimaging center NeuroSpin (CEA) and INRIA to try to do just that.

We are seeing a lot of researchers doing this lately, trying to train AI to mimic human senses so that robots can copy human behavior. Sometimes it is hard to keep track of who is doing what in robotics and AI. The original article on popsci.com was written by Charlotte Hu, the assistant technology editor at Popular Science.

Through this collaboration, Meta researchers plan to analyze how human brains and deep learning algorithms trained on language or speech tasks respond to the same written or spoken texts. In theory, this could reveal how both human brains and artificial ones find meaning in language.

The goal is to find out how human brains process information better than machines do: not faster, but better.

“What we’re doing is trying to compare brain activity to machine learning algorithms to understand how the brain functions on one hand, and to try to improve machine learning,” says Jean-Rémi King, a research scientist at Meta AI. “Within the past decade, there has been tremendous progress in AI on a wide variety of tasks from object recognition to automatic translation. But when it comes to tasks which are perhaps not super well defined or need to integrate a lot of knowledge, it seems that AI systems today remain quite challenged, at least, as compared to humans.”

This is not like Neuralink, the company Elon Musk has been busy with for the past few years, whose goal is to connect the human brain to a computer interface.

AI systems are very good at specific tasks, as opposed to general ones. However, when a task becomes too complicated, even if it is still specific, or “requires bringing different levels of representations to understand how the world works and what motivates people to think in one way or another,” they tend to fall short, says King.

For example, he notes that some natural language processing models still get stumped by syntax. “They capture many syntactic features but are sometimes unable to conjugate the subject and verb when you have some nested syntactic structures in between. Humans have no problem doing these types of things.”

Like Google, Meta AI is open-sourcing its language models to get feedback from other researchers, including those who study the behaviors and ethical impacts of these large AI systems.

It feels like every day one of the tech giants gets closer to creating a machine that can do most things humans can do mentally. But it may be a while before they create a program that can make a human being fall in love with the power of its speech or its ability to write poetry.

Read more at popsci.com.
