Diverse Nazi Image Among Errors Made by Google’s Gemini Image Generator

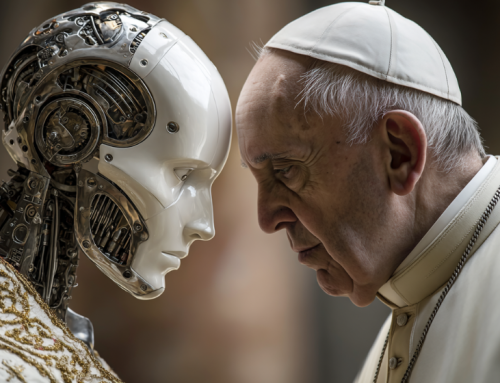

Google has apologized for inaccuracies in some historical images generated by its AI tool, Gemini. The tool had been criticized for depicting historically white figures, such as the Founding Fathers of the U.S., and groups such as Nazi-era German soldiers as people of color. This was possibly an overcorrection for AI’s longstanding problems with racial bias.

Gemini, which Google unveiled earlier this month, is an AI platform whose features include image generation, matching offerings from competitors such as OpenAI. However, users on social media have raised concerns that, in its bid to promote racial and gender diversity, it fails to produce historically accurate results.

The controversy around Gemini has been largely fueled by Fox News Digital and right-wing figures who view Google as a liberal tech company. They argued that Gemini was unwilling to acknowledge the existence of white people and that it produced images overwhelmingly depicting people of color. To support these claims, critics shared Gemini-generated images of historical groups or figures, such as the Founding Fathers, in which the people shown were predominantly non-white.

Google hasn’t identified specific images it considers erroneous but has confirmed it is working to improve Gemini’s depictions. Image generators are trained on large corpora of pictures and written captions, and they often amplify the stereotypes those corpora contain. Given this bias inherent in generative AI, it’s plausible that Gemini was deliberately trying to boost diversity.

Even some critics of Google defended its goal of portraying diversity, but they faulted Gemini for pursuing it without nuance. They pointed out that a racially diverse set of images is appropriate for a prompt such as “an American woman,” but the same diversity is historically inaccurate for a 1943 German soldier.

Gemini currently appears to refuse some image-generation tasks. Requests for images of Vikings, German soldiers from the Nazi era, or an American president from the 1800s were denied. However, some historical requests still produce images that misrepresent the past, the kind of “inaccuracies” Google has acknowledged.

The controversy surrounding Gemini underscores the ongoing challenge of ensuring AI systems accurately represent diversity without distorting historical facts.

Read more at theverge.com