Russian-Based Service Already Altered 100K Photos of Women

Deepfakes are going viral as a free online service hosted by a Russian company lets users submit full-body photos that AI converts into nude images of women only, according to a story that the Washington Post broke this week.

Sensity, an Amsterdam-based cybersecurity start-up, first reported the unethical service to the Post, noting that the service allows people to place new orders through an automated “chatbot” on the encrypted messaging app Telegram.

Sensity’s CEO Giorgio Patrini told the Post that it is a “dark shift” in how the technology is being used.

“The fact is that now every one of us, just by having a social media account and posting photos of ourselves and our lives publicly, we are under threat,” Patrini said in an interview. “Simply having an online persona makes us vulnerable to this kind of attack.”

The biggest user base is in Russia, according to an interview with an anonymous employee of the company, though members also come from the United States and across Europe, Asia and South America.

New users can create fake nudes for free, but then are “encouraged” to pay.

“A beginners’ rate offers new users 100 fake photos over seven days at a price of 100 Russian rubles, or about $1.29. ‘Paid premium’ members can request fake nude photos be created without a watermark and hidden from the public channel,” the Post reported.

Deepfakes initially focused on celebrities, for instance by adding women’s heads to pornographic videos. The women in the public database, by contrast, are typically unknown: “everyday workers, college students and other women, often taken from their selfies or social media accounts on sites like TikTok and Instagram.”

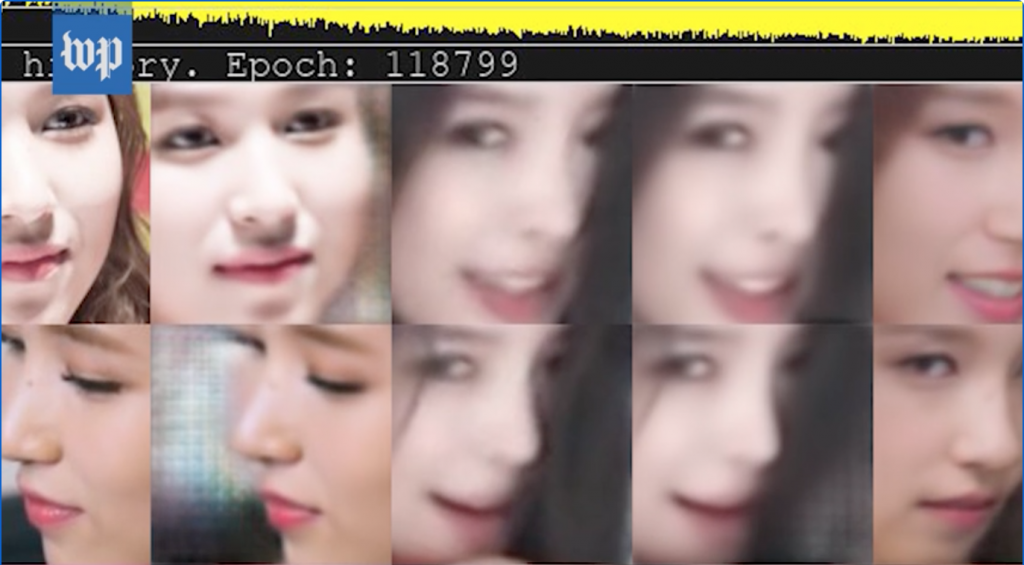

Image of a face used for deepfake manipulation in a Washington Post video on a story about public access to creating fake nudes. (Source: Washington Post)

Several women professors interviewed for the story discussed how the use of this technology is damaging and misogynistic.

Britt Paris, an assistant professor at Rutgers University who has researched deepfakes, said manipulators defend themselves as providing lighthearted entertainment.

“These amateur communities online always talk about it in terms of: ‘We’re just … playing around with images of naked chicks for fun,’ ” Paris said. “But that glosses over this whole problem that, for the people who are targeted with this, it can disrupt their lives in a lot of really damaging ways.”

read more at washingtonpost.com
