UC Berkeley, Adobe Research Leads to AI Detection of Altered Photos

Photoshop artists have airbrushed photos of female fashion models in magazines for decades, and most readers now expect computer-perfected faces and bodies in those images. Now, however, anyone can look glam without paying for expensive studio shots. High-powered Photoshop tools make it possible for even novice users to slim faces, remove wrinkles and upgrade anyone’s headshot with ease.

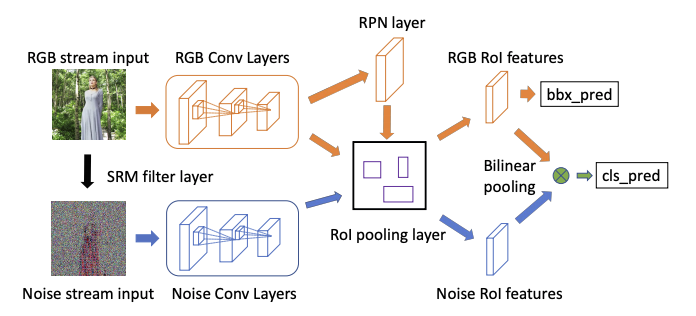

Diagram of how the Photoshop detection program works.

Adobe, the company behind Photoshop, now wants to help people uncover such deception with an AI program that detects artificial changes to images, part of an effort to address “fake news,” according to a story in MIT Technology Review. Adobe worked with researchers at UC Berkeley to develop the program, which was trained on thousands of photos taken from the internet.

The program correctly identified edited faces 99% of the time, compared with a 53% success rate for humans, according to the company. Photoshop’s Face Aware Liquify tool lets artists change facial expressions, one of the ways images in social media memes are altered to deceive people.

At a time when “deepfakes” showing figures who are not real are readily created, Adobe’s tool may be key to countering them. According to a story on PetaPixel.com, Adobe researcher Vlad Morariu has focused on detecting image manipulation as part of the government-sponsored DARPA Media Forensics program. Morariu wrote a research paper on his work.

DigitalTrends.com breaks down exactly how it works:

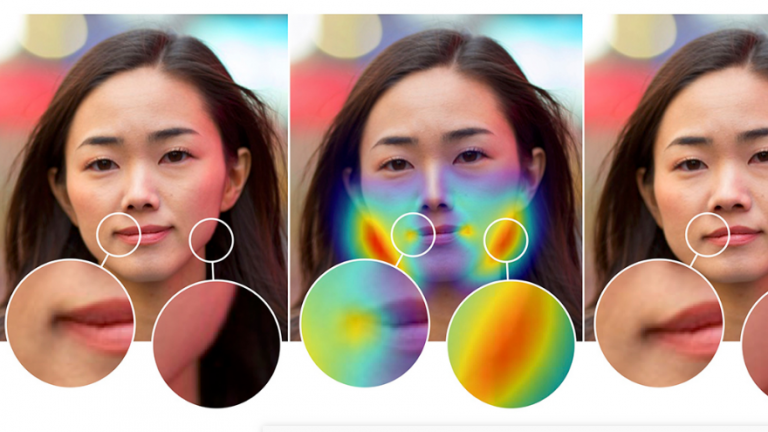

“To train a system to recognize a faked photo within a matter of seconds, the team fed the software thousands of manipulated images in order to help train the computer to spot photo trickery. The software looks at a handful of different clues… One method is to look for the slight differences in the red, green and blue color channels from altered pictures.

The second method the program uses for finding fakes is to generate a noise map of the image. The noise or grain that a camera captures, which is more obvious at high ISOs but appears in all images, has a unique pattern to each camera sensor. Because of that unique pattern, objects that have been added from another image will pop in that noise map. Using both techniques together helped improve the program’s accuracy.”
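The noise-map idea in the quote above can be illustrated with a small sketch. This is not Adobe's program, which is a trained system; it is a hand-written approximation that assumes a grayscale image held in a NumPy array. The function names (`box_blur`, `noise_map`, `suspicious_blocks`), the 8-pixel block size and the outlier threshold are all hypothetical choices made for illustration. The sketch estimates grain by subtracting a blurred copy of the image, measures the grain level in each block, and flags blocks whose level differs sharply from the rest of the picture.

```python
import numpy as np

def box_blur(img, k=3):
    """Mean filter with a k x k window and edge padding (k must be odd)."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    out = np.zeros_like(img, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def noise_map(gray, block=8):
    """Standard deviation of the high-frequency residual in each block."""
    gray = gray.astype(float)
    residual = gray - box_blur(gray)           # what is left is mostly grain
    h, w = gray.shape
    h, w = h - h % block, w - w % block        # crop to a whole number of blocks
    blocks = residual[:h, :w].reshape(h // block, block, w // block, block)
    return blocks.std(axis=(1, 3))

def suspicious_blocks(nmap, z_thresh=6.0):
    """Flag blocks whose noise level is a robust outlier (assumed threshold)."""
    med = np.median(nmap)
    mad = np.median(np.abs(nmap - med)) + 1e-9
    return np.abs(nmap - med) / (1.4826 * mad) > z_thresh

# Synthetic demo: a flat gray image with fine grain, plus a pasted region
# whose grain is much coarser, as if it came from a different camera.
rng = np.random.default_rng(0)
img = 128 + rng.normal(0, 2, size=(64, 64))
img[16:32, 16:32] = 128 + rng.normal(0, 12, size=(16, 16))

nmap = noise_map(img)
flags = suspicious_blocks(nmap)
print(np.argwhere(flags))                      # block coordinates that stand out
```

In this synthetic case the pasted region covers the four blocks at rows 2 to 3 and columns 2 to 3 of the noise map, and those are the ones that stand out. Real photos are harder, because texture, compression and resizing also change local noise, which is one reason a learned detector that combines several clues is more practical than a fixed threshold.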

The purpose of the AI will be to help spot fakes, especially those that sacrifice accuracy and truth in order to manipulate viewers on social media sites.
