Google Seeks to Help Computers Understand ‘Atoms’ of Human Activity

In October, Google’s Machine Perception Research organization announced the release of a large dataset of video segments designed to help train neural networks to recognize and interpret human behavior. Google, which owns the YouTube video service as well as the eminent AI research group DeepMind, named the project AVA, or “atomic visual actions.” As the name suggests, it aims to produce a diverse dataset of short clips highlighting basic (hence “atomic”) human activities.

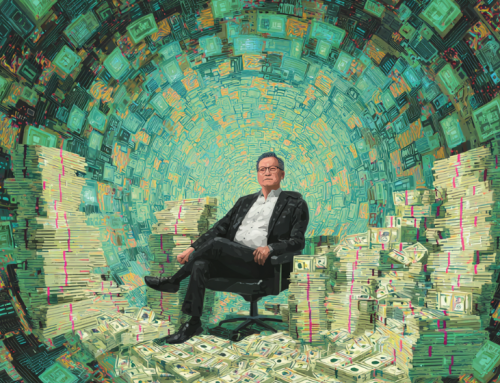

An example of AVA clips showing specific “atomic” actions indicated by red boxes. Images via Google, featuring video from this source.

In a process described further in a corresponding research paper on the project, AVA is derived from long-form video content sourced directly from films and television series featured on YouTube, chosen to reflect a wide variety of human actions as well as diversity in the race and gender of the people appearing in the clips. Google then split this collection of video content into the more than 57,000 non-overlapping, 3-second-long clips that comprise the dataset. Each 3-second clip was then analyzed and annotated to label the actions being performed by the individual(s) in the scene, drawing on a vocabulary of 80 action categories. In total, AVA’s clip database features 96,000 labeled individuals performing a grand total of 210,000 actions.
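To make the segmentation step concrete, here is a minimal Python sketch of cutting one long video into consecutive, non-overlapping 3-second windows. It is an illustration only, not Google's actual pipeline: the function name `split_into_clips` is hypothetical, and dropping any leftover tail shorter than 3 seconds is an assumption the article does not address.

```python
def split_into_clips(duration_s: float, clip_len_s: float = 3.0) -> list[tuple[float, float]]:
    """Return (start, end) times, in seconds, of consecutive non-overlapping clips.

    Assumption for this sketch: a trailing remainder shorter than
    clip_len_s is discarded rather than padded or kept as a short clip.
    """
    if clip_len_s <= 0:
        raise ValueError("clip_len_s must be positive")
    if duration_s < 0:
        raise ValueError("duration_s must be non-negative")

    # Number of whole clips that fit in the video.
    n_clips = int(duration_s // clip_len_s)

    # Clip i starts exactly where clip i - 1 ends, so clips never overlap.
    return [(i * clip_len_s, (i + 1) * clip_len_s) for i in range(n_clips)]


if __name__ == "__main__":
    # A 10-second video yields three full clips; the final second is dropped.
    for start, end in split_into_clips(10.0):
        print(f"{start:.1f}s to {end:.1f}s")
```

Under these assumptions, a 15-minute (900-second) stretch of video would yield 300 such clips. The totals quoted above also imply an average of roughly 2.2 action labels per labeled individual (210,000 divided by 96,000).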

A small subset showing the most common of AVA’s specific atomic action labels. Image via Google.

While computer vision networks, such as Google’s own famous Inception, have become adept at identifying objects in images and videos thanks to vast benchmark datasets for object recognition, AVA seeks to lay the groundwork for neural networks that can be better trained to recognize human actions, which “are, by nature, less well-defined than objects in videos, making it difficult to construct a finely labeled action video dataset,” according to Google.

AVA’s potential academic and commercial applications are limited only by the imagination, and such a large and diverse body of data on human activities will undoubtedly prove vital in any number of applied projects, from better automated analysis and summarization of video content to improved computer vision systems in robots that are better able to judge human actions, reactions, and sentiments. According to the AVA team, the long-term goal of the project is “to enable machines to achieve human-level intelligence in sensory perception” and ultimately “imbu[e] computers with ‘social visual intelligence’–the ability to perceive what humans are doing, what might they do next, and what they are trying to achieve.”
