Five Steps Forward To General AI

When Google DeepMind’s AlphaGo AI beat expert player Lee Sedol at the ancient game of Go in 2016, the victory came as a shock to many; some commentators had not expected a computer to best a human at Go for years or even decades. A moment as pivotal in the public sphere as Deep Blue’s historic victory over chess champion Garry Kasparov in 1997, AlphaGo’s triumph over Lee Sedol (and its numerous subsequent victories) was seen as a revolutionary milestone in AI development. Go is so complex, and its combinations of moves so vast, that some AI experts (though not all, importantly) speculated that an AI that could grasp Go was well on the way to developing more general qualities of intelligence, with truly intelligent AI capable of meeting and exceeding human potential soon to follow.

AlphaGo has quietly advanced in the past year, most notably with last week’s announcement of AlphaGo Zero, an even more refined Go bot trained entirely on its own, without human input. Yet the world hasn’t been overtaken by Terminator killer bots or turned into a galactic paperclip factory à la Bostrom’s famous thought experiment in AI safety, so it seems we can rest easy knowing that the advent of general AI is not right around the corner.

Still, AlphaGo’s victory (especially with the development of AlphaGo Zero) does represent a significant skip down the path toward Artificial General Intelligence, as explained in a brisk but informative Huffington Post article about how close we are to AI.

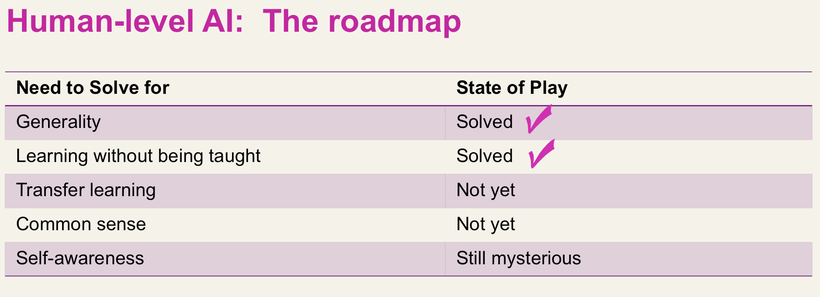

The article’s author, AI researcher George Zarkadakis, stops just short of the classic trap of trying to define what exactly “human-level AI” might look like, or of wading into other important but semantic philosophical quandaries about the nature of intelligence. Instead, he offers five broad categories of ability that an AI must master in order to be, roughly, “human-level” (outlined in depth in another article of his):

The five signposts on the way to human-level AI according to Zarkadakis. Image via Huffington Post.

According to Zarkadakis’ analysis, AlphaGo represents one of the two areas in which AI science has unquestionably evolved. He also notes that the next two items on the list are problems already seeing progress in current AI research.

The final saving grace for humanity, it seems, is that human-level AI (again, strictly by Zarkadakis’ definition) will need to master something that we can’t even entirely define yet: some semblance of self-awareness. But the topic of self-awareness is hotly contested among AI researchers, with many believing that it is either impossible to attain, impossible to identify, or altogether irrelevant. And an interstellar paperclip factory doesn’t necessarily need a consciousness (or a conscience) to turn all we hold dear into Clippy doppelgängers.
