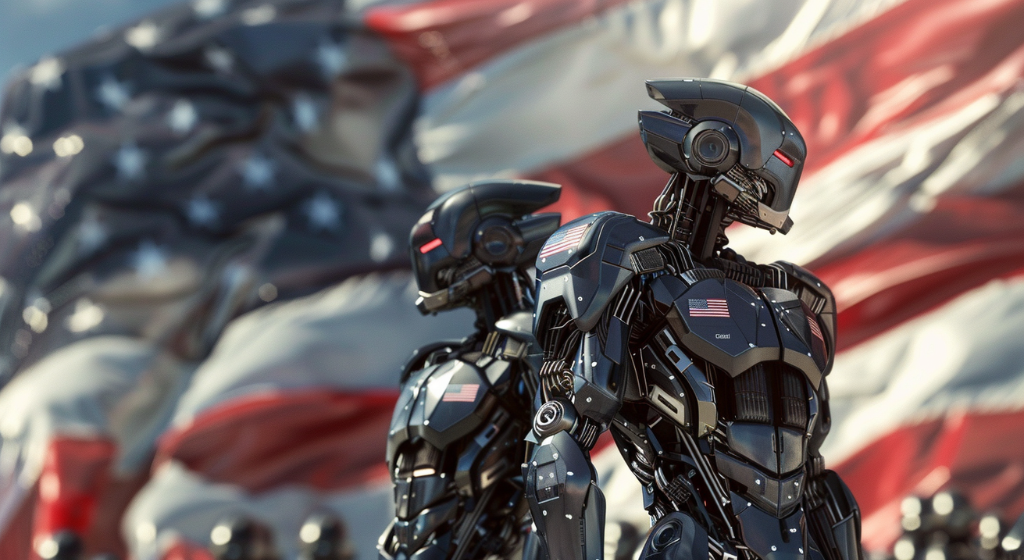

According to a Public Citizen report, the Pentagon’s policies do not adequately prevent the deployment of autonomous “killer robots,” which dehumanize targets, facilitate widespread killing, and violate international human rights law. (Source: Image by RR)

Autonomous Weapons Development Raises Legal and Ethical Questions

The Public Citizen report raises alarms about the ethical implications of integrating AI into weapon systems, particularly the development of autonomous weapons capable of making independent decisions about lethal force. Although the report warns that these weapons dehumanize targets and violate international human rights law, the Pentagon’s policies do not clearly prohibit their deployment, leaving questions about accountability unresolved.

Critics argue that the Department of Defense’s directive on autonomous weapons falls short of providing robust guidelines for their development and use. While the directive emphasizes compliance with ethical principles, it fails to address the complex ethical and legal challenges inherent in autonomous weapons systems. As reported by salon.com, loopholes in the policy, such as the ability to waive senior review in cases of urgent military need, raise concerns about the potential for unchecked proliferation of these weapons.

The debate surrounding AI in warfare highlights broader questions about the role of technology in modern conflict and the moral responsibilities of decision-makers. As military contractors continue to develop autonomous weapons, geopolitical rivalries and economic interests further drive the advancement of these technologies, despite their ethical implications. The report suggests that the United States should pledge not to deploy autonomous weapons and support international efforts to negotiate a global treaty to that effect.

However, the rapid development of autonomous weapons worldwide poses significant challenges to such efforts. Within the U.S. alone, competition to build autonomous weapons is intensifying among military contractors, leading to the development of unmanned tanks, submarines, and drones. As autonomous systems become more prevalent, the ethical debate surrounding their deployment grows increasingly urgent, highlighting the need for comprehensive legal and ethical frameworks to govern their use in warfare.

Ultimately, the ethical landscape of war remains fraught with uncertainty, requiring critical examination of the moral and legal frameworks governing the use of AI in warfare. As technology continues to evolve, it is imperative to address the ethical implications of autonomous weapons and ensure that decision-makers are held accountable for their deployment.

Read more at salon.com
