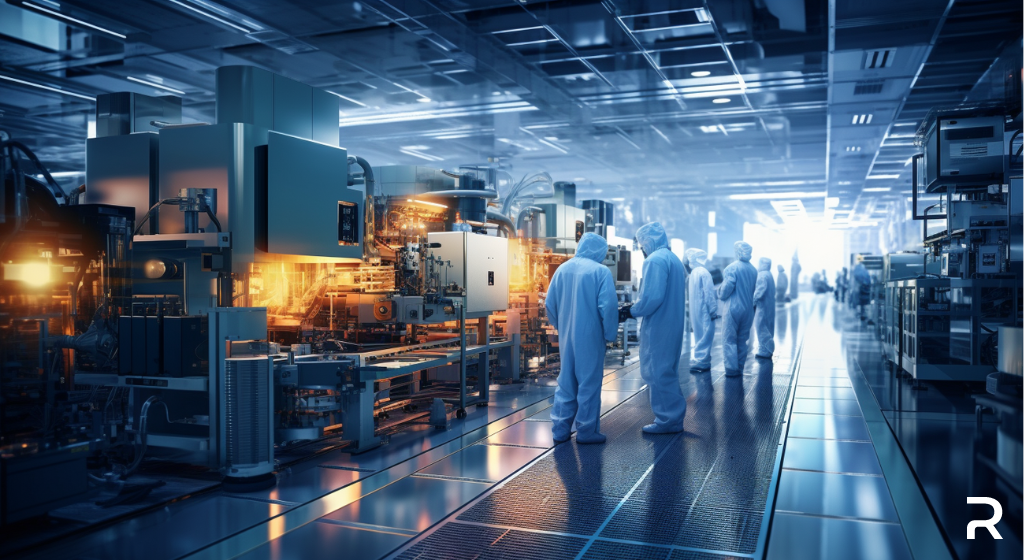

Meta’s foray into AI chip manufacturing will reduce its reliance on NVIDIA. (Source: Image by RR)

Meta’s AI Chip Deployment Aims for Cost Savings and Performance

Meta Platforms, the parent company of Facebook, is gearing up for a significant leap in its AI capabilities by deploying a second-generation custom chip in its data centers. This move aims to reduce Meta’s dependence on Nvidia, a dominant player in the AI chip market, and control the escalating costs associated with running AI workloads. With ambitions to integrate AI into Facebook, Instagram, WhatsApp, and hardware devices like Ray-Ban smart glasses, Meta has aggressively expanded its computing capacity and invested billions in specialized chips and data center modifications.

The deployment of this in-house chip is expected to bring substantial cost savings: at Meta’s scale, it could cut hundreds of millions of dollars in annual energy costs and billions in chip purchasing costs. The company’s internally developed accelerators will work in tandem with off-the-shelf graphics processing units (GPUs), a mix intended to balance performance and efficiency for Meta-specific workloads. Meta CEO Mark Zuckerberg revealed plans to amass approximately 350,000 flagship “H100” processors from Nvidia by the end of the year; combined with chips from other suppliers, that would bring Meta’s total compute capacity to the equivalent of 600,000 H100s.

This chip deployment marks a positive turn for Meta’s in-house AI silicon project, whose first iteration the company discontinued in 2022 in favor of purchasing Nvidia GPUs. The new chip, internally referred to as “Artemis,” is specialized for inference tasks, but Meta is also working on a more ambitious chip that, like a GPU, can perform both training and inference. The shift towards in-house chips could make processing Meta’s recommendation models more efficient, with corresponding savings in cost and energy.

Read more at Reuters.com
