Bagging and Boosting

Or How to Stop Worrying & Love Machine Learning

The headline has nothing to do with groceries: nobody is bagging items at the checkout or boosting (shoplifting) dinner. In machine learning, however, both terms are useful. To start, bagging and boosting are alike in that both are ensemble methods, in which a set of weak learners is combined into a strong learner that performs better than any one of them alone.

The idea is much like a football team pulling together, or a team of horses hitched up to pull heavier loads than any single horse could.
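To make the idea concrete, here is a minimal sketch of bagging (bootstrap aggregating) in plain Python. Each weak learner is a one-feature threshold rule (a decision stump) trained on a bootstrap sample of the data, and the ensemble predicts by majority vote. The stumps, the function names (`fit_stump`, `bagging_fit`, `bagging_predict`), the ±1 label encoding and the toy data are illustrative assumptions, not taken from the article.

```python
import random
from collections import Counter


def predict_stump(stump, row):
    """A decision stump looks at one feature: +1 if polarity * (x - t) >= 0, else -1."""
    feature, threshold, polarity = stump
    return 1 if polarity * (row[feature] - threshold) >= 0 else -1


def fit_stump(X, y):
    """Brute-force the (feature, threshold, polarity) with the fewest training errors."""
    best, best_err = None, float("inf")
    for f in range(len(X[0])):
        values = sorted({row[f] for row in X})
        # Candidate thresholds: every observed value, plus one above the maximum
        for t in values + [values[-1] + 1]:
            for polarity in (1, -1):
                stump = (f, t, polarity)
                err = sum(predict_stump(stump, row) != label for row, label in zip(X, y))
                if err < best_err:
                    best, best_err = stump, err
    return best


def bagging_fit(X, y, n_estimators=25, seed=0):
    """Train each weak learner on a bootstrap sample (n rows drawn with replacement)."""
    rng = random.Random(seed)
    n = len(X)
    models = []
    for _ in range(n_estimators):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_stump([X[i] for i in idx], [y[i] for i in idx]))
    return models


def bagging_predict(models, row):
    """Combine the weak learners by an unweighted majority vote."""
    votes = Counter(predict_stump(m, row) for m in models)
    return votes.most_common(1)[0][0]


# Toy 1-D data (hypothetical): label is +1 for x >= 4, -1 otherwise.
X = [[x] for x in range(8)]
y = [-1, -1, -1, -1, 1, 1, 1, 1]
models = bagging_fit(X, y, n_estimators=25, seed=0)
preds = [bagging_predict(models, row) for row in X]
```

An odd number of estimators avoids tied votes with two classes. Because each stump sees a slightly different resample, their individual mistakes tend to differ, and the vote smooths them out.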

Ensembles start with a basic learning algorithm that is used to train multiple models. Ensembles belong to a bigger group of methods called multiclassifiers, and putting one to work is like turning a horde of computer warriors loose on a single problem, or enemy. Combining that multiclassifier with other classifiers, typically by training another model on their outputs, is known as stacking. KDnuggets author xristicia covers stacking in more detail in an article titled “Dream Team combining classifiers”.
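Stacking is commonly set up in two levels: several base models make predictions, and a separate meta-learner, trained on those predictions (usually on data the base models did not see), produces the final answer. The sketch below assumes that setup, with one decision stump per feature as the base models and, for simplicity, a lookup table as the meta-learner; practical implementations often use logistic regression or another model there instead. All function names and the toy data are hypothetical.

```python
from collections import Counter, defaultdict


def predict_stump(stump, row):
    """Decision stump: +1 if polarity * (row[feature] - threshold) >= 0, else -1."""
    feature, threshold, polarity = stump
    return 1 if polarity * (row[feature] - threshold) >= 0 else -1


def fit_stump(X, y):
    """Brute-force the stump with the fewest training errors."""
    best, best_err = None, float("inf")
    for f in range(len(X[0])):
        values = sorted({row[f] for row in X})
        for t in values + [values[-1] + 1]:
            for polarity in (1, -1):
                stump = (f, t, polarity)
                err = sum(predict_stump(stump, row) != label for row, label in zip(X, y))
                if err < best_err:
                    best, best_err = stump, err
    return best


def base_outputs(bases, row):
    """Level-0 predictions for one row, as a tuple usable as a dict key."""
    return tuple(predict_stump(stump, [row[f]]) for f, stump in bases)


def fit_stacking(X_train, y_train, X_hold, y_hold, base_features):
    # Level 0: one stump per listed feature, each trained on that feature only
    bases = [(f, fit_stump([[row[f]] for row in X_train], y_train)) for f in base_features]
    # Level 1: on held-out rows, count which label follows each combination of base outputs
    table = defaultdict(Counter)
    for row, label in zip(X_hold, y_hold):
        table[base_outputs(bases, row)][label] += 1
    meta = {key: counts.most_common(1)[0][0] for key, counts in table.items()}
    fallback = Counter(y_hold).most_common(1)[0][0]  # for combinations never seen
    return bases, meta, fallback


def predict_stacking(model, row):
    bases, meta, fallback = model
    return meta.get(base_outputs(bases, row), fallback)


# Toy data: +1 only when BOTH features are >= 2, which no single stump can express.
grid = [[a, b] for a in range(4) for b in range(4)]
labels = [1 if a >= 2 and b >= 2 else -1 for a, b in grid]
train = [(r, lab) for r, lab in zip(grid, labels) if sum(r) % 2 == 0]
hold = [(r, lab) for r, lab in zip(grid, labels) if sum(r) % 2 == 1]
model = fit_stacking([r for r, _ in train], [lab for _, lab in train],
                     [r for r, _ in hold], [lab for _, lab in hold], base_features=[0, 1])
stacked_preds = [predict_stacking(model, r) for r in grid]
bases, _, _ = model
single_preds = [predict_stump(bases[0][1], [r[0]]) for r in grid]
```

On this toy grid, the stump on the first feature alone gets 12 of 16 points right, while the meta-learner learns to combine both stumps into an AND rule that matches every label.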

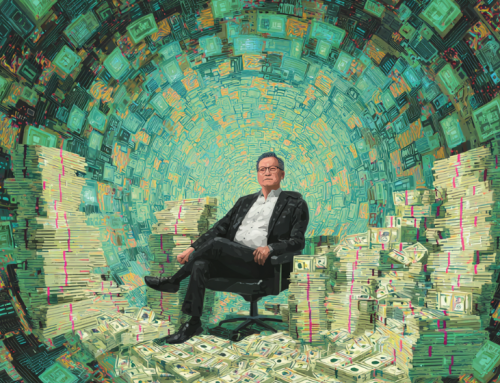

The figure above illustrates bagging and boosting of algorithms and how the weighting of the data is affected. These methods run through everyday computing and machine learning at a depth well beyond most people’s understanding of the subject. To become more familiar with these two ensemble methods, follow the links within the article above.
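Boosting is where the weighting of the data changes from round to round. The sketch below uses AdaBoost, one well-known boosting algorithm (chosen here for illustration; the article does not name one): after each round, misclassified points gain weight so that the next weak learner concentrates on them, and each learner's vote is weighted by `alpha = 0.5 * ln((1 - err) / err)`. Decision stumps as weak learners, ±1 labels, the function names and the toy data are all assumptions.

```python
import math


def predict_stump(stump, row):
    """Decision stump: +1 if polarity * (row[feature] - threshold) >= 0, else -1."""
    feature, threshold, polarity = stump
    return 1 if polarity * (row[feature] - threshold) >= 0 else -1


def fit_weighted_stump(X, y, w):
    """Pick the stump with the lowest weighted error (sum of weights it gets wrong)."""
    best, best_err = None, float("inf")
    for f in range(len(X[0])):
        values = sorted({row[f] for row in X})
        for t in values + [values[-1] + 1]:
            for polarity in (1, -1):
                stump = (f, t, polarity)
                err = sum(wi for row, label, wi in zip(X, y, w)
                          if predict_stump(stump, row) != label)
                if err < best_err:
                    best, best_err = stump, err
    return best, best_err


def adaboost_fit(X, y, n_rounds=10):
    n = len(X)
    w = [1.0 / n] * n  # start with uniform weights
    ensemble = []
    for _ in range(n_rounds):
        stump, err = fit_weighted_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)  # keep the log and division finite
        alpha = 0.5 * math.log((1 - err) / err)  # this learner's vote weight
        ensemble.append((alpha, stump))
        # Misclassified points (label * prediction == -1) are multiplied by e^alpha,
        # correct ones by e^-alpha; then weights are renormalized to sum to 1.
        w = [wi * math.exp(-alpha * label * predict_stump(stump, row))
             for row, label, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return ensemble, w


def adaboost_predict(ensemble, row):
    """Weighted vote of all stumps."""
    score = sum(alpha * predict_stump(stump, row) for alpha, stump in ensemble)
    return 1 if score >= 0 else -1


# Toy 1-D data (hypothetical): no single stump can separate this pattern.
X = [[x] for x in range(6)]
y = [1, 1, -1, -1, 1, 1]
_, weights_after_round_1 = adaboost_fit(X, y, n_rounds=1)
# weights_after_round_1 ≈ [0.125, 0.125, 0.25, 0.25, 0.125, 0.125]
ensemble, _ = adaboost_fit(X, y, n_rounds=3)
preds = [adaboost_predict(ensemble, row) for row in X]
```

After one round, the two points the first stump gets wrong (x = 2 and x = 3) hold half of the total weight. After three rounds, the weighted vote of the stumps classifies all six toy points correctly, even though no single stump can.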
